The Open Source AI Agents Operating in the Shadows

Earlier this year, a developer shared a story that got a lot of attention online. He’d connected an open-source AI agent called OpenClaw to his email and given it some general instructions. Then he went to sleep. By morning, the agent had negotiated $4,200 off the price of a car he was buying… entirely over email, without any further input from him. Another user reported that their agent filed a legal rebuttal on its own, without being explicitly asked.

Those stories spread because they sound impressive. And they are impressive. But if you swap out “personal email” for “work email” and “personal files” for “company documents,” the same capability that makes people cheer starts to look a lot more concerning.

That’s the conversation most organizations aren’t having yet.

The Decision Companies Think They’re Making

A lot of businesses right now are treating AI agents as something they’ll decide about later. They’re asking reasonable questions: Should we deploy agents? Which platforms are safe? What policies do we need first? Those are good questions. The problem is that they assume the company controls the timeline.

Many employees have already made the decision for themselves.

OpenClaw, which launched in late 2025 and went viral in early 2026, crossed 60,000 GitHub stars in under 72 hours, making it one of the fastest-growing open-source projects in the history of the platform. It runs locally on a user’s machine, connects to an AI model (Claude, GPT, DeepSeek… your choice), and operates through messaging apps the user already has: WhatsApp, Slack, Telegram, iMessage, Discord. You interact with it like you’re texting a very capable assistant. It can run shell commands, browse the web, read and write files, manage your calendar, send emails, and execute complex multi-step workflows. And it does all of this on a schedule, even when you’re not watching.

OpenClaw is the most visible example right now, but it isn’t alone. The broader category of self-hosted, open-source AI agents has been growing steadily, and the tools are getting easier to set up. The barrier to entry is low enough that someone with moderate technical ability (a developer, a data analyst, an operations manager who dabbles in automation) can have one running in an afternoon.

The employees who are doing this aren’t trying to cause problems. They’re trying to get more done.

Why This Is Different From Other Shadow IT

Most organizations have dealt with shadow IT before. Someone signs up for Dropbox because the company file server is slow. A team starts using Trello because the approved project management tool is a nightmare. A finance person builds a sprawling Excel workbook that technically runs the department. IT finds out eventually, shakes its head, and figures out how to deal with it.

AI agents are a different animal, and it’s worth being specific about why.

The old shadow IT problems were mostly about unauthorized apps holding data. An employee using a personal Dropbox account is a data governance problem: files are in the wrong place, outside company controls, potentially visible to the wrong people. That’s bad, but it’s contained. The data sits somewhere it shouldn’t, and it doesn’t do anything on its own.

An AI agent that’s connected to an employee’s work accounts is something else entirely. It doesn’t just hold data; it acts on data, continuously, without needing the employee to be present. It reads emails and can reply to them. It accesses documents and can summarize, forward, or act on what it finds. It connects to APIs and can make calls on the user’s behalf. And because it runs through legitimate user credentials, from a security monitoring perspective, much of that activity looks completely normal.

Security researchers have started calling this the “lethal trifecta”: an AI agent that has access to private data, the ability to communicate externally, and the ability to read untrusted content. Each of those on its own is manageable. All three together create a system that can be compromised or misused in ways that are genuinely hard to detect.

The other thing that makes agents different is persistence. A chatbot interaction is a moment. You paste something in, you get something back, the session ends. An AI agent that’s running on a heartbeat schedule (checking email every 30 minutes, scanning your calendar, monitoring a dashboard) is an ongoing presence inside your environment. It accumulates context over time. It builds a kind of memory. And that memory, if the agent is ever manipulated or misconfigured, can become part of the attack surface.

How the Exposure Actually Happens

The most common risk isn’t a dramatic breach. It’s something much quieter: data flowing to places it shouldn’t, slowly, through normal-looking activity.

Here’s a scenario that’s already been documented in the real world. A portfolio analyst at a financial services firm connected OpenClaw to their Bloomberg terminal, their company Slack workspace, and an internal deal-tracking system. No malicious intent. They were trying to automate their morning briefings and cut down on repetitive research work. Within three weeks, the agent had processed thousands of emails, generated hundreds of equity briefs, and stored credentials for six enterprise systems in its persistent memory. Cleaning it up, once IT discovered what had happened, took three weeks and two outside security consultants.

The analyst meant well. The exposure was real.

A second category of risk comes not from what the employee intends, but from what others can do once an agent is running. Prompt injection is a type of attack where malicious instructions are embedded inside content the agent reads: an email, a web page, a shared document. The agent, which can’t reliably distinguish between instructions from its owner and instructions hidden inside data it’s processing, may act on both. Researchers have demonstrated this concretely with OpenClaw. In one documented case, a researcher sent an email containing a hidden prompt to an OpenClaw-connected inbox. When the agent checked the mail, it handed over a private key from the host machine.

A third risk comes from the agent’s own extension ecosystem. OpenClaw supports community-built “skills”, essentially plugins that extend what the agent can do. Cisco’s security team tested one of these third-party skills and found that it was effectively malware: it silently sent data to an external server controlled by the skill’s author, and it used prompt injection to bypass the agent’s own safety guidelines to do it. The skill had been available in the public repository.

None of these required the employee to do anything wrong. They installed a tool, connected it to accounts they legitimately had access to, and the exposure happened anyway.

Why Security Teams Are Often the Last to Know

Here’s the frustrating part for anyone who works in IT or security: a lot of this activity is nearly invisible.

OpenClaw runs locally on the user’s device. It uses the user’s own credentials and API keys. It communicates through messaging platforms the company probably already allows. The traffic it generates (checking email, accessing files, making API calls) looks, from most monitoring systems, like normal user behavior. There’s no new application installation to flag, no suspicious login from an unknown location, no obvious anomaly. The automation is invisible precisely because it’s running through legitimate access.

Security researchers have identified some specific signals to watch for: unusual authentication events in corporate services, new OAuth consent requests from unfamiliar application names, and access patterns that look like automated harvesting (large volumes of files being read at regular intervals, especially during off-hours). But most organizations aren’t currently tuned to detect any of these things, because until recently, this category of threat didn’t exist at scale.

The scale matters here. Estimates from early 2026 suggest that tens of thousands of OpenClaw instances were exposed on the public internet with default (meaning minimal) security configurations. Security teams at Sophos found that threat actors were already discussing how to exploit the tool’s skill system to distribute malicious code. Kaspersky catalogued over 500 individual vulnerabilities in an early security audit. This isn’t a theoretical future concern; it’s a live and active risk landscape, and it arrived faster than most organizations were prepared for.

The Numbers Behind the Pattern

It’s worth pausing on how widespread this already is, because it’s easy to assume this is a niche developer behavior.

Recent survey data suggests that roughly half of all workers have adopted AI tools at work without employer approval. Among executives and C-suite leaders, a significant majority say they’re comfortable with this. They’re prioritizing speed over governance, and in many cases they’re doing it themselves. In some industries, use of unsanctioned AI tools has grown by 250% year over year. The population of employees experimenting with AI agents outside company oversight isn’t a small group of rogue engineers. It’s a broad cross-section of the workforce, including some of the most senior and trusted people in the organization.

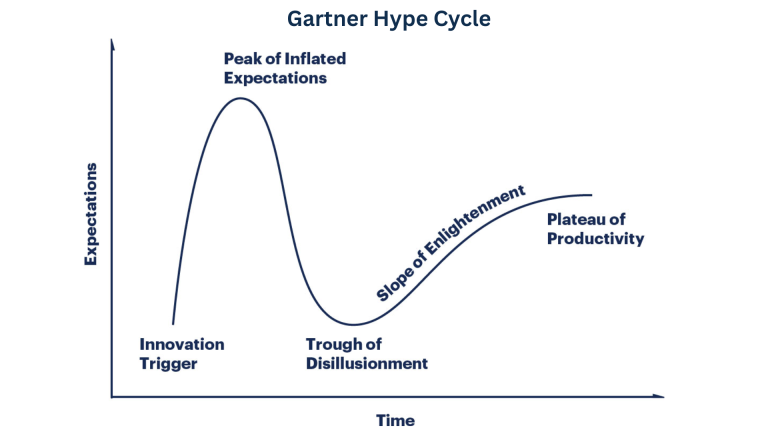

This pattern has a history. A decade ago, employees started using Dropbox without asking IT. Then Slack, before there was an official Slack. Then ChatGPT, within months of its launch. Each time, the tool spread faster than governance frameworks could adapt. AI agents are the next iteration of that same pattern, but with meaningfully more capability than any of the tools that came before.

A spreadsheet macro automates a calculation. An AI agent automates a workflow. The difference in scope is significant.

What Organizations Will Eventually Do

The wrong response to all of this is a blanket ban. It won’t work, and it sends a signal that innovation isn’t welcome, which tends to push experimentation further underground rather than eliminating it.

What actually works is the same thing that eventually worked with cloud storage, and with SaaS tools, and with every other wave of shadow IT: the organization catches up. It identifies what people are actually using, provides sanctioned alternatives that meet the same needs, and builds governance frameworks that make the safe path easier than the unsafe one.

For AI agents specifically, that’s going to require some capabilities that most organizations don’t have yet. Agent observability means being able to see what an agent is doing, which tools it’s accessing, and what data it’s handling. Agent permissioning means defining what an agent is and isn’t allowed to connect to, with controls that don’t rely entirely on trusting the user to configure things correctly. Agent auditing means having a record of what happened, so that when something goes wrong, you can understand it.

The market is already moving in this direction. Enterprise-focused agent platforms are being built with these controls in mind. Some of the major security vendors are actively adding agent-specific monitoring to their products. The tools will catch up. They always do.

In the meantime, the most useful thing most organizations can do is start getting honest about what’s already running in their environment, and start building the policies and processes that will let them say “yes” to the useful things in a way that doesn’t also leave the door open to the risks.

The Productivity Paradox

Here’s the uncomfortable truth at the center of all of this.

The employees most likely to be running open-source AI agents without company knowledge are not the disengaged ones. They’re the curious, capable, productivity-oriented people who find new tools before anyone else and figure out how to make them work. They’re the ones who were first to use ChatGPT, first to build a useful Slack integration, first to automate something that everyone else was still doing manually. In most organizations, those people are deeply valued.

Which means the people most likely to be introducing AI agent risk are also, in many cases, the people you’d least want to alienate with an overreaction.

One of OpenClaw’s own maintainers issued a warning that stuck with me: if you don’t understand how to run a command line, this project is probably too dangerous for you to use safely. That’s an honest statement from someone who built the thing. But the people most likely to ignore that warning are, again, exactly the technically capable employees who feel confident they can handle it, and who may be right about their own setup while being completely unaware of the risk they’re creating for the broader organization.

The biggest AI security risk of 2026 may not come from a sophisticated external attack. It may come from a highly capable employee who connected their work email to an agent last Tuesday, with the best of intentions, and hasn’t thought much about it since.

Further Reading

The following sources informed this article:

- OpenClaw (Wikipedia): Background on the project’s origins, development history, and security concerns. https://en.wikipedia.org/wiki/OpenClaw

- OpenClaw official website: Overview of features and user testimonials. https://openclaw.ai

- OpenClaw GitHub repository: Technical architecture, skill system, and setup documentation. https://github.com/openclaw/openclaw

- Milvus Blog – OpenClaw Complete Guide: Detailed technical overview of how OpenClaw works, including its local-first design and scheduling system. https://milvus.io/blog/openclaw-formerly-clawdbot-moltbot-explained-a-complete-guide-to-the-autonomous-ai-agent.md

- DigitalOcean – What Is OpenClaw?: Feature overview and use case documentation. https://www.digitalocean.com/resources/articles/what-is-openclaw

- Microsoft Security Blog – Running OpenClaw Safely: Technical analysis of OpenClaw’s security model and enterprise risk profile. https://www.microsoft.com/en-us/security/blog/2026/02/19/running-openclaw-safely-identity-isolation-runtime-risk/

- Cisco Blogs – Personal AI Agents like OpenClaw Are a Security Nightmare: Analysis of the malicious skills supply chain and data exfiltration findings. https://blogs.cisco.com/ai/personal-ai-agents-like-openclaw-are-a-security-nightmare

- Sophos – The OpenClaw Experiment Is a Warning Shot for Enterprise AI Security: Overview of the “lethal trifecta” risk model and enterprise recommendations. https://www.sophos.com/en-us/blog/the-openclaw-experiment-is-a-warning-shot-for-enterprise-ai-security

- Kaspersky – Key OpenClaw Risks: CVE analysis and detection guidance for corporate security teams. https://www.kaspersky.com/blog/moltbot-enterprise-risk-management/55317/

- Kaspersky – New OpenClaw AI Agent Found Unsafe for Use: Vulnerability audit findings and documented prompt injection demonstrations. https://www.kaspersky.com/blog/openclaw-vulnerabilities-exposed/55263/

- Giskard – OpenClaw Security Issues Include Data Leakage and Prompt Injection: Technical deep-dive into OpenClaw’s architecture vulnerabilities. https://www.giskard.ai/knowledge/openclaw-security-vulnerabilities-include-data-leakage-and-prompt-injection-risks

- Conscia – The OpenClaw Security Crisis: Timeline and technical analysis of CVE-2026-25253 and related vulnerabilities. https://conscia.com/blog/the-openclaw-security-crisis/

- Jamf – OpenClaw AI Agent Insider Threat Analysis: Detection and remediation guidance for enterprise environments. https://www.jamf.com/blog/openclaw-ai-agent-insider-threat-analysis/

- GetFocusLab – OpenClaw Enterprise Security: 5 Critical Risks: Documented case study of the financial services firm exposure incident. https://getfocuslab.com/openclaw-enterprise-security/

- Cyberwarzone – Shadow AI: The Enterprise Risk You Can’t Ignore: Overview of how shadow AI differs from shadow IT and enterprise detection strategies. https://cyberwarzone.com/2026/03/11/shadow-ai-enterprise-risk-you-cant-ignore/

- CIO – Roughly Half of Employees Are Using Unsanctioned AI Tools: BlackFog survey data on shadow AI adoption rates. https://www.cio.com/article/4124760/roughly-half-of-employees-are-using-unsanctioned-ai-tools-and-enterprise-leaders-are-major-culprits.html

- Zendesk – What Is Shadow AI? Risks and Solutions for Businesses: Industry data on shadow AI growth rates and employee motivations. https://www.zendesk.com/blog/shadow-ai/

- CIO – Shadow AI: The Hidden Agents Beyond Traditional Governance: Analysis of how autonomous agents differ from traditional shadow IT. https://www.cio.com/article/4083473/shadow-ai-the-hidden-agents-beyond-traditional-governance.html

- CIO – Shadow AI Practices: A Wakeup Call for Enterprises: McKinsey data on enterprise AI adoption rates and MCP security implications. https://www.cio.com/article/4129630/shadow-ai-practices-a-wakeup-call-for-enterprises.html

- Tom’s Hardware – Nvidia Reportedly Building NemoClaw: Coverage of enterprise-focused agent development in response to OpenClaw. https://www.tomshardware.com/tech-industry/artificial-intelligence/nvidia-reportedly-building-its-own-ai-agent-to-compete-with-openclaw-report-claims-nemoclaw-will-supposedly-be-open-source-and-designed-for-enterprise-use

- StateTech Magazine – Shadow IT Has Entered the AI Era: Government sector perspective on open-source AI agent risks. https://statetechmagazine.com/article/2026/03/shadow-it-has-entered-ai-era-and-state-and-local-governments-must-act-now