AI Makes Everyone Look Experienced. That’s the Problem.

I do code assessments for teams coding with AI. By the time I’m looking at the code, they’ve already done their own review. They’ve audited it themselves, they’ve had AI audit it, sometimes they’ve had AI audit the AI’s audit. They’re confident it’s clean.

I always find major problems.

And here’s the part that matters: the problems aren’t AI problems. They’re judgment problems. Things experience catches and tools don’t, no matter how many passes you run.

That gap, between what the code looks like and what the person who shipped it actually understands, is the thing I want to talk about. Because it’s not really a story about AI. It’s a story about how we used to know who was good at this job, and why that system just quietly stopped working.

The thing that just broke

For a long time, the way you evaluated an engineer was simple enough that nobody had to think about it. You looked at their code. You looked at their PRs. You looked at the systems they designed and the bugs they fixed and the features they shipped. The visible artifact (the thing they produced) was a reasonably reliable signal of the capability underneath it. Not perfect, but close enough that optimizing for the artifact didn’t catastrophically diverge from optimizing for the underlying skill.

The whole apparatus of how we evaluate technical work assumes this coupling holds. Performance reviews, promotion criteria, interviews, the way you decide who to trust with the hard problem. All of it rests on the assumption that what someone produces tells you something reliable about what they can do.

AI breaks the coupling.

When AI can produce plausible code cheaply, the code stops being a reliable signal of the underlying capability that produced it. A junior with AI can ship code that looks like senior code. The PR can look clean. The design doc can read well. The tests can pass. And none of it tells you whether real judgment was exercised, whether the person could have caught the subtle thing that was wrong, whether they’re building actual capability or just operating tools that produce capability-shaped output.

This is not a small shift. It changes the basic mechanics of how value gets produced and recognized in technical work.

Why this hits you harder than it hits your manager

Most of the writing about this problem is aimed at managers and companies. How will we evaluate engineers now? How will we know who to promote? Those are real questions, but they’re not the most pressing version of the problem, and they’re not the version that affects you most directly.

The version that affects you most directly is this: AI makes you look more experienced than you are, and the person it fools first is you.

Your manager’s confusion about how to evaluate you is a problem you’ll feel eventually, in some performance cycle a year from now. Your own confusion about what you actually know is a problem you’re feeling right now, even if you haven’t named it yet. Every signal you’ve ever used to gauge your own growth (I shipped this, I solved that, my code passed review) is now decoupled from the underlying thing those signals used to represent. The artifact is real. It works. It got merged. But the line from “I produced this” to “I am capable of producing this” got cut, and most people haven’t noticed.

This is the trap. Not that AI makes you look better than you are to other people, though it does. The dangerous version is that AI makes you look better than you are to yourself, and the gap compounds silently.

You can spend two years shipping code that works, getting good reviews, hitting your goals, and end up with the judgment of someone two years less experienced than your title suggests. Nothing in your day-to-day will tell you this is happening. The feedback signals all look good. You’ll only find out when you hit a problem that AI can’t solve for you, and you’ll discover that the foundation you thought you’d been building wasn’t there.

What I see in the assessments

When I’m reviewing AI-assisted code, the team is almost always genuinely competent. These aren’t bad engineers. They’re not lazy. They’ve usually run the code through multiple review passes, including AI-driven ones, and they have good reasons to believe it’s solid.

The problems I find aren’t the kind AI catches. They’re things like: the architecture is locally clean but globally wrong for what this system is actually going to need in eighteen months. The error handling looks comprehensive but misses the failure mode that actually matters in production. The abstractions are reasonable in isolation but compose badly with the rest of the codebase. The data model handles every case the team thought of and breaks on the case they didn’t.

These are judgment problems. They require seeing the code in context, knowing what kind of system this is becoming, having watched similar decisions play out badly somewhere else five years ago. AI is genuinely useful for a lot of things, but it can’t bring experience it doesn’t have, and it can’t audit for problems that require knowing what this particular system needs to become.

What strikes me most is that the teams I work with often did everything right by the standards we used to hold code review to. They read the code carefully. They asked good questions. They got AI to look for issues. By the old playbook, this should have been enough. It isn’t, and the reason it isn’t is the same reason this whole shift matters: artifact-level review can’t catch problems that live underneath the artifact.

What replaces the old path

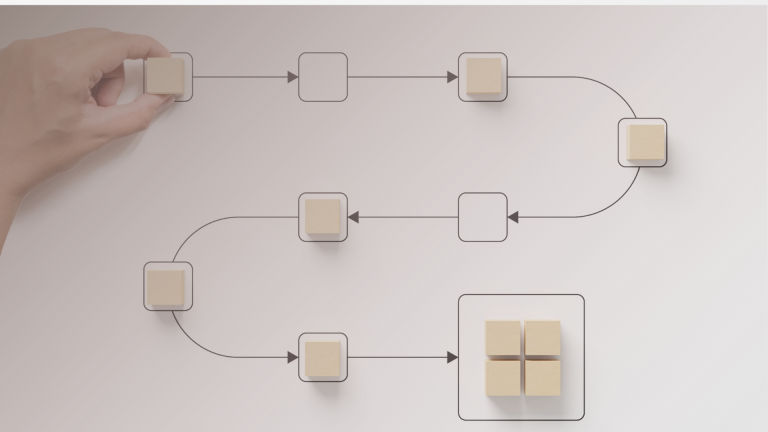

The old path to becoming a genuinely experienced engineer ran through producing a lot of code, getting it reviewed by people who knew more than you, fixing the things they caught, and slowly absorbing the patterns until you started catching them yourself. The artifact was the vehicle for the learning. Producing the thing built the capability to produce the thing.

AI lets you skip large parts of that. You can produce the artifact without doing most of the work that used to build the underlying capability. This is great for shipping velocity and terrible for formation, and the bill comes due later.

The path that replaces it isn’t anti-AI. Refusing to use the tool is a bad answer; the tool is genuinely useful and the people using it well are going to outpace the people who refuse. But you have to be deliberate about doing the work that AI is happy to let you skip. Concretely:

Understand the code before you ship it, not after. Not in an “I read it and it looks fine” way, but in an “I could defend every meaningful decision in this code from first principles” way. If you can’t, that’s the gap, and the gap is the work.

Sit with hard problems before reaching for the tool. The minutes or hours you spend stuck on something, before AI bails you out, are where the actual capability gets built. Skipping that step feels efficient and is genuinely costly in a way that won’t show up for a while.

Build the mental model of why a design works, not just the ability to generate designs that work. AI can produce a reasonable architecture for most problems you’ll encounter. It cannot give you the judgment to know which parts of that architecture will hold up and which won’t, and that judgment only comes from having built the model yourself.

None of this is a productivity tip. It’s a recognition that the formation steps that used to be forced on you (because you couldn’t produce the artifact without doing them) are now optional, and you have to choose them on purpose.

The reframe

Here’s the part where this stops being a warning and starts being useful.

The coupling between artifact and capability was always lossy. Even before AI, plenty of people produced impressive-looking work without the underlying judgment, and plenty of genuinely capable people produced work that didn’t telegraph their skill. AI didn’t introduce the gap. It just made the gap impossible to ignore.

The people who figure out how to build real capability in this environment are going to have an advantage that compounds. Most of their peers will be optimizing for artifacts that no longer reliably signal what they used to signal, and over time, the gap between “looks experienced” and “is experienced” will become one of the most important things separating one engineer from another. The market will eventually figure this out. The companies that figure it out first will have something rare: a workforce that can actually do the things their job titles claim they can do.

The threat isn’t AI. The threat is using AI in a way that lets you skip the thing that was actually making you valuable, and not noticing for long enough that the skip becomes irreversible.

The question to ask yourself isn’t whether your code is good. It’s whether you’d know if it weren’t.